ABOUT THIS FEED

Machine Learning Mastery, founded by Dr. Jason Brownlee, is a blog focused on teaching machine learning and AI through hands-on, practical tutorials. Its RSS feed delivers step-by-step guides, coding examples, and explanations of complex algorithms in an approachable style. The content is designed for learners at all levels, with special attention to those transitioning from theory to practice. Posts cover a wide range of topics, including deep learning, natural language processing, reinforcement learning, and optimization techniques. The blog emphasizes clarity and action, encouraging readers to apply concepts directly with Python and related tools. With new content appearing weekly, this feed is an excellent resource for self-learners, students, and professionals who want to sharpen their skills in applied machine learning.

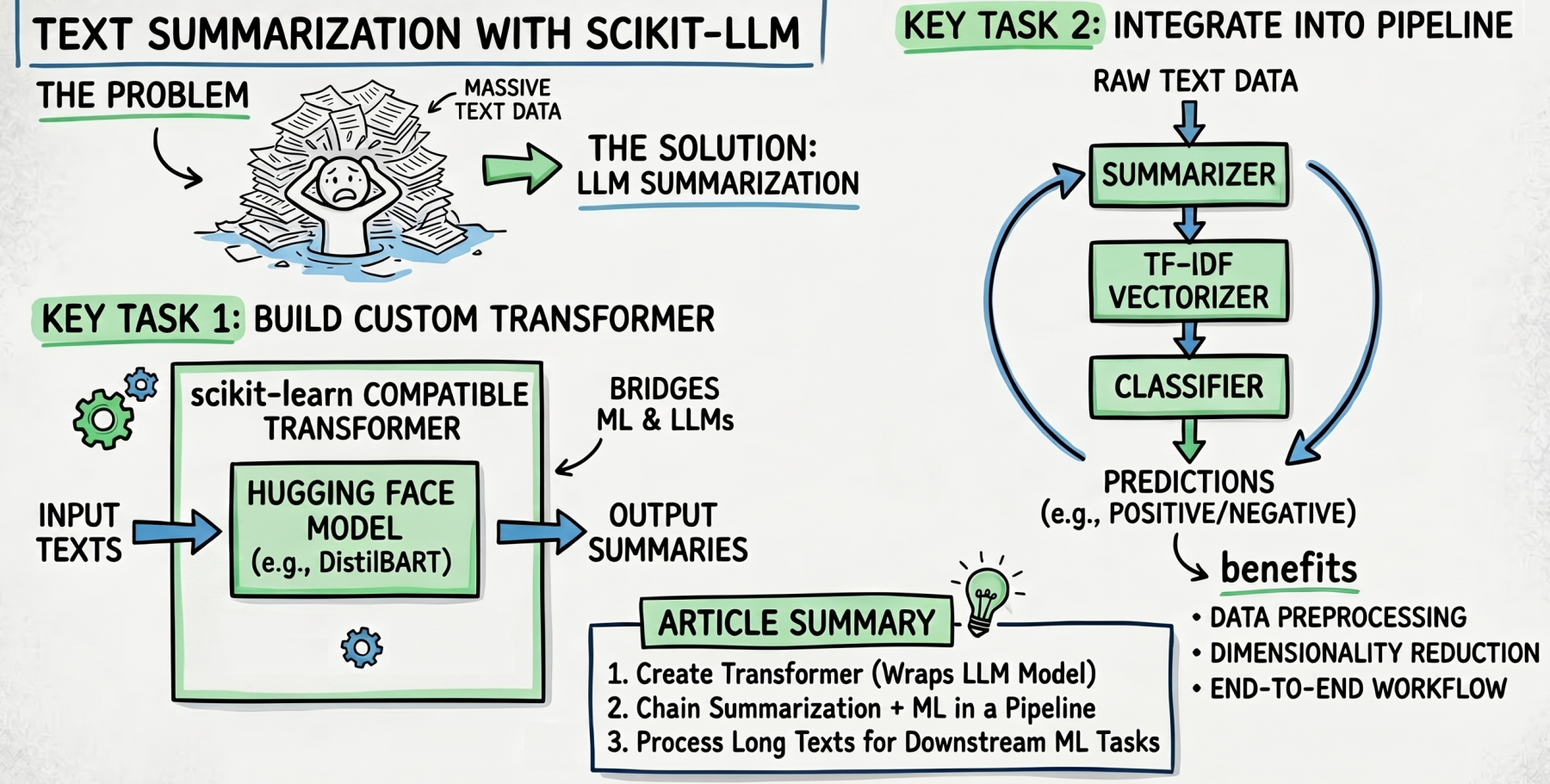

- Text Summarization with Scikit-LLM

In a

- Building AI Agents with Local Small Language Models

The idea of building your own AI agent used to feel like something only big tech companies could pull off.

- Train, Serve, and Deploy a Scikit-learn Model with FastAPI

FastAPI has become one of the most popular ways to serve machine learning models because it is lightweight, fast, and easy to use.

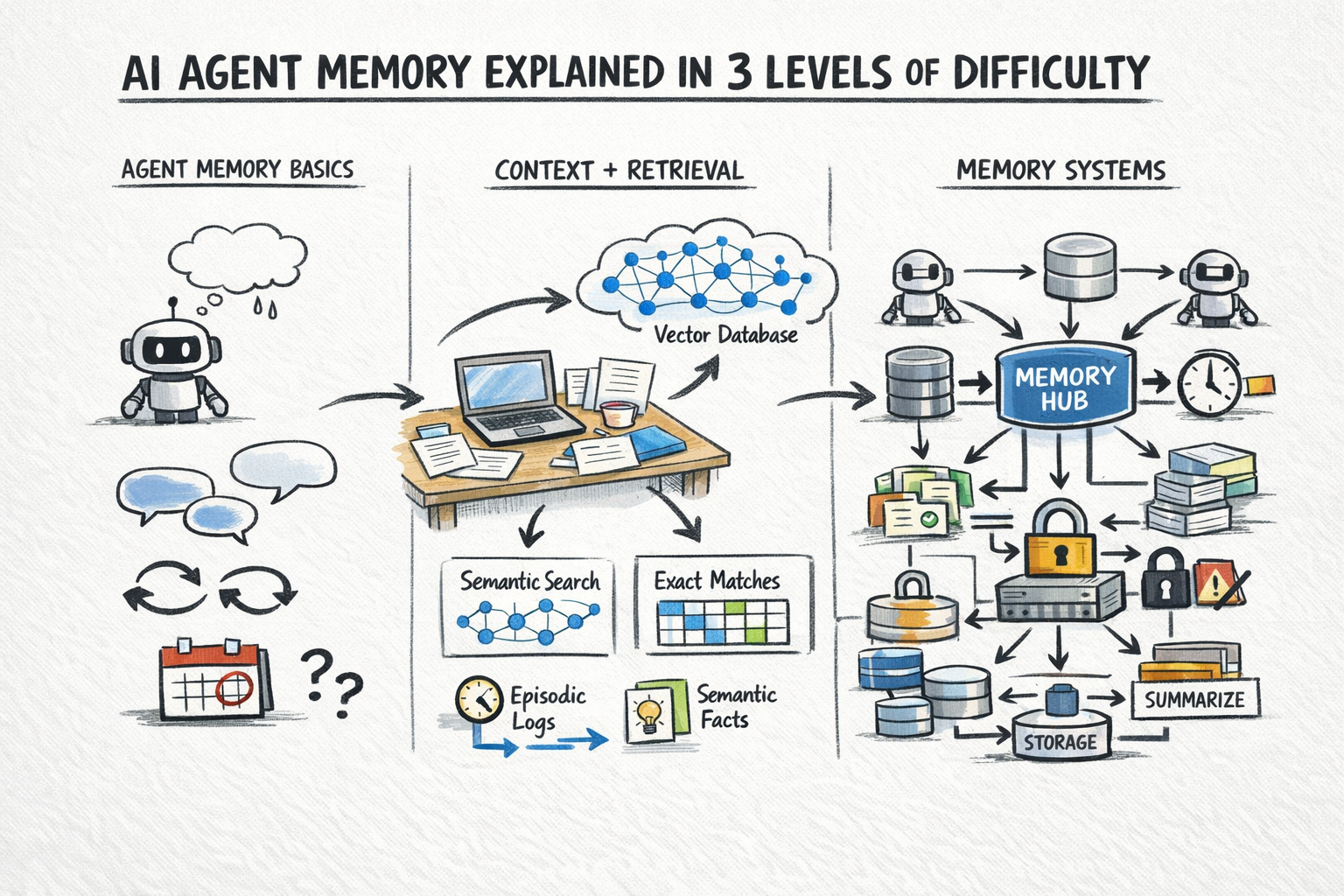

- AI Agent Memory Explained in 3 Levels of Difficulty

A stateless AI agent has no memory of previous calls.

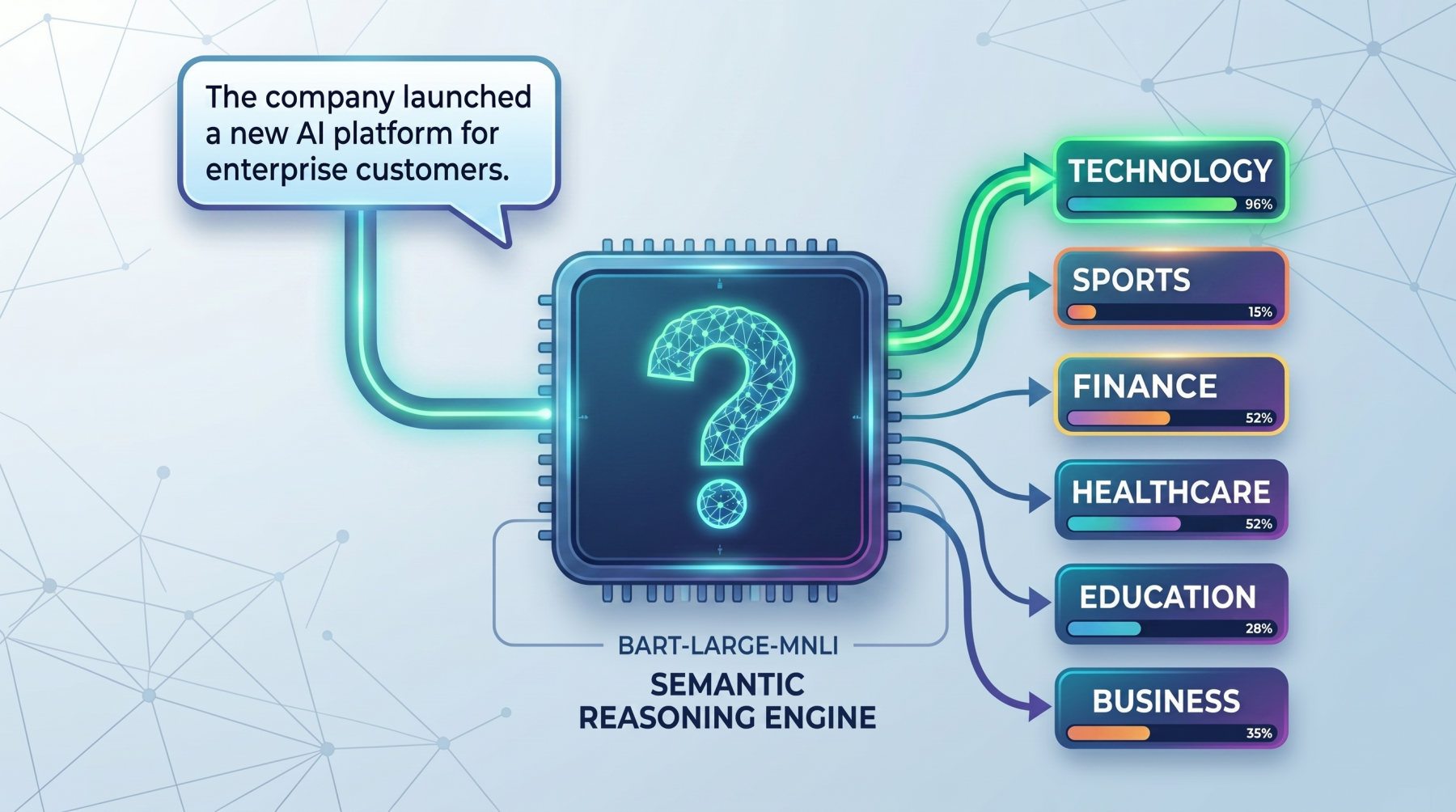

- Getting Started with Zero-Shot Text Classification

Zero-shot text classification is a way to label text without first training a classifier on your own task-specific dataset.

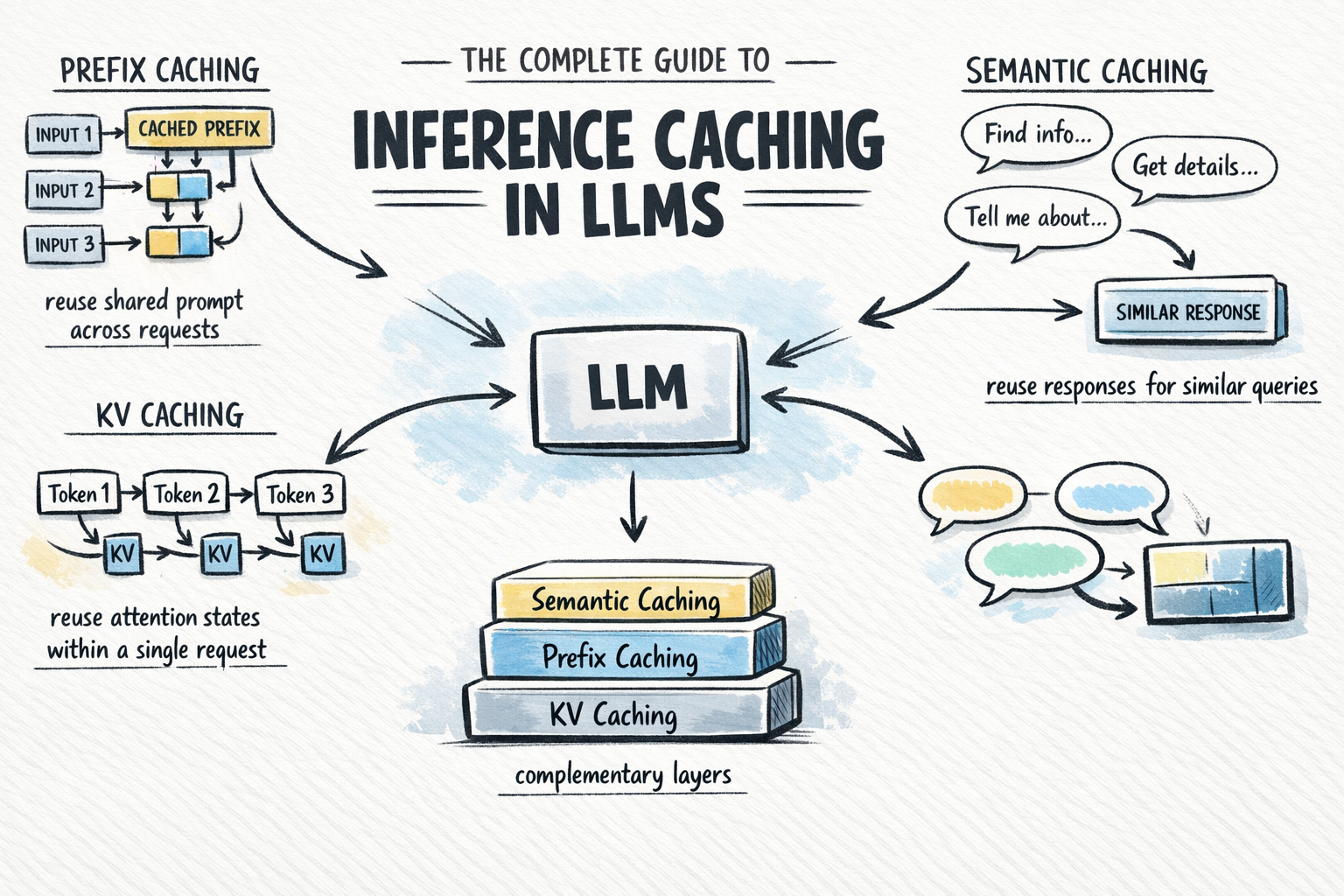

- The Complete Guide to Inference Caching in LLMs

Calling a large language model API at scale is expensive and slow.

- Python Decorators for Production Machine Learning Engineering

You've probably written a decorator or two in your Python career.

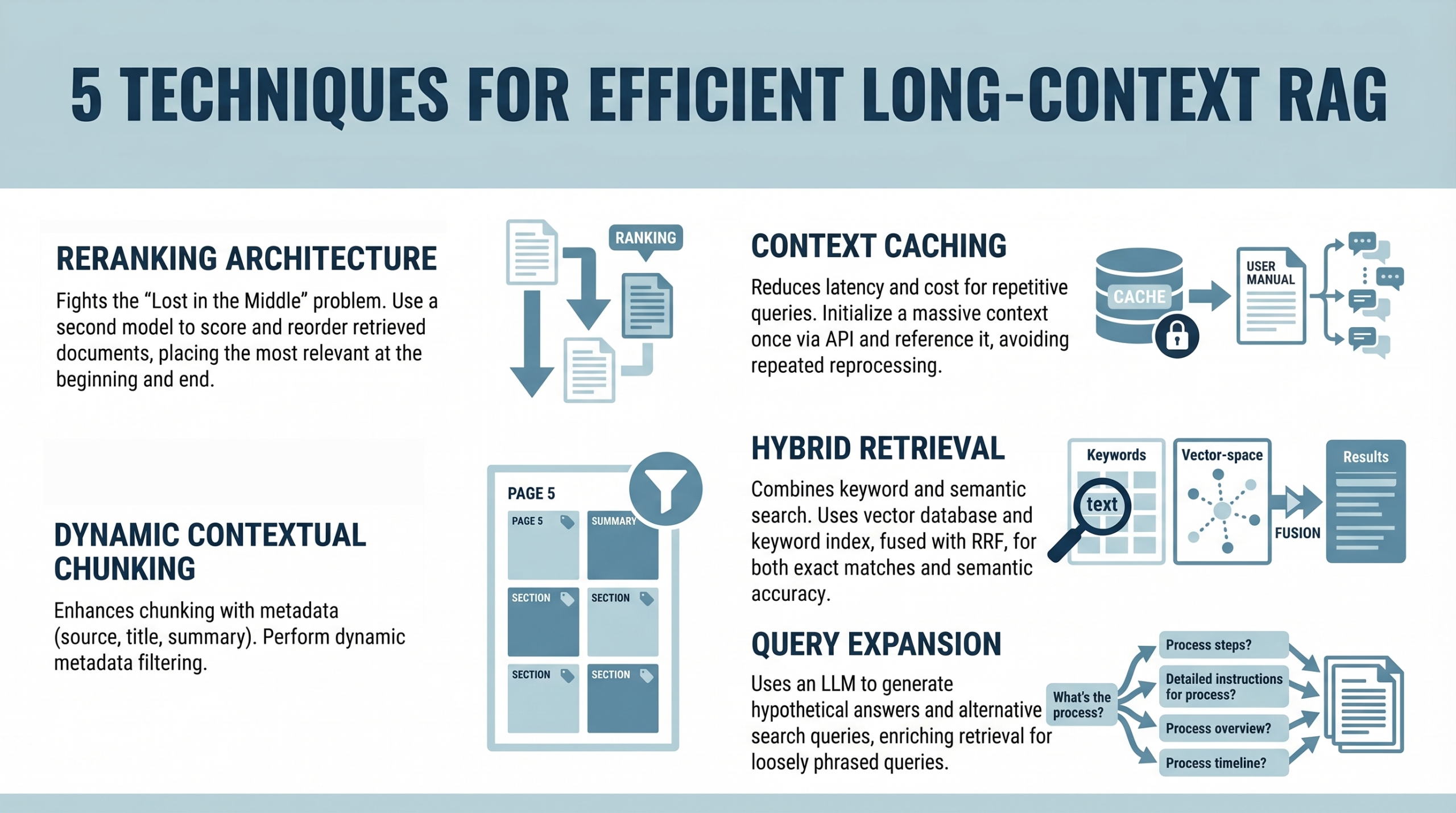

- 5 Techniques for Efficient Long-Context RAG

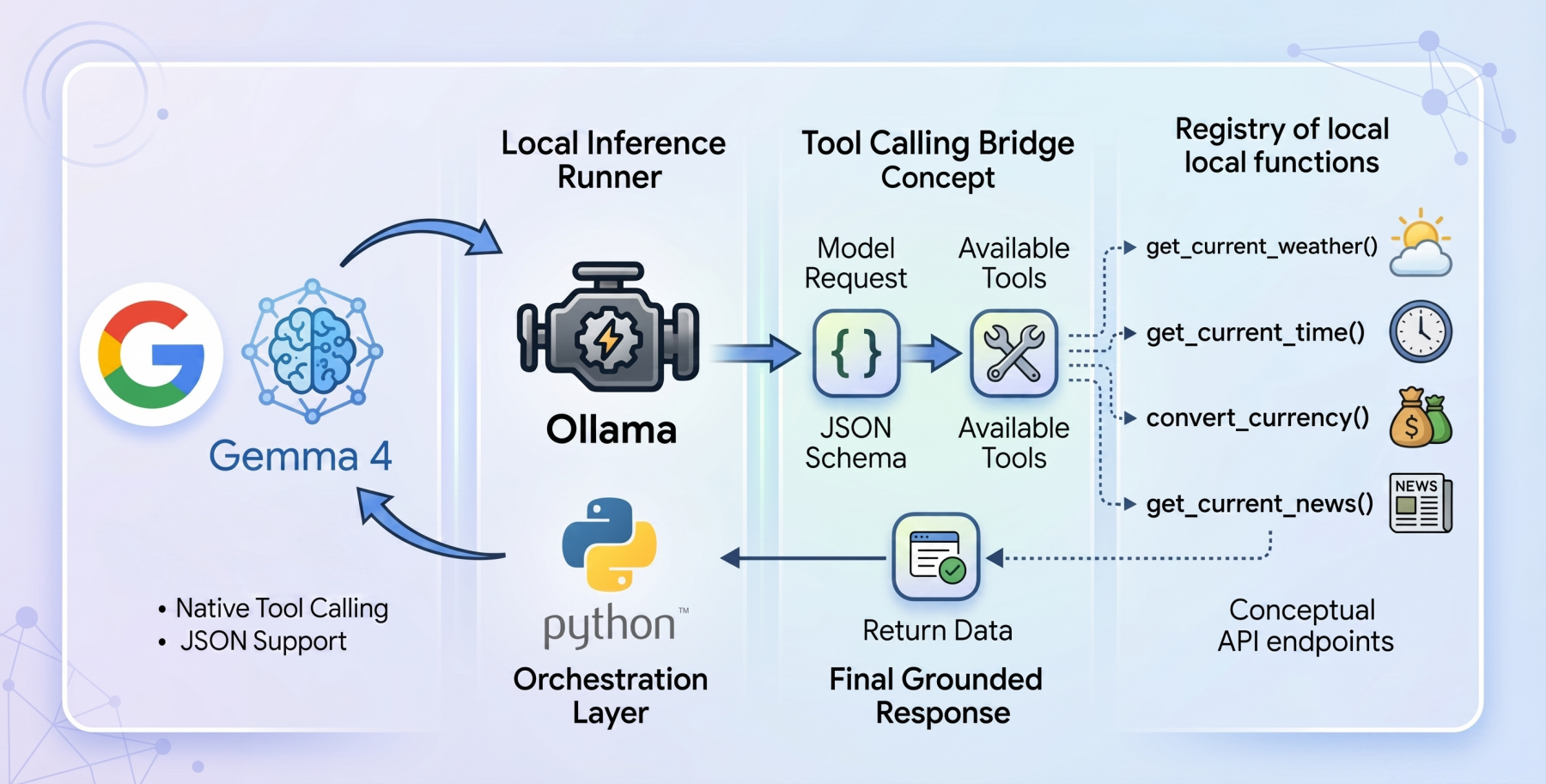

- How to Implement Tool Calling with Gemma 4 and Python

The open-weights model ecosystem shifted recently with the release of the

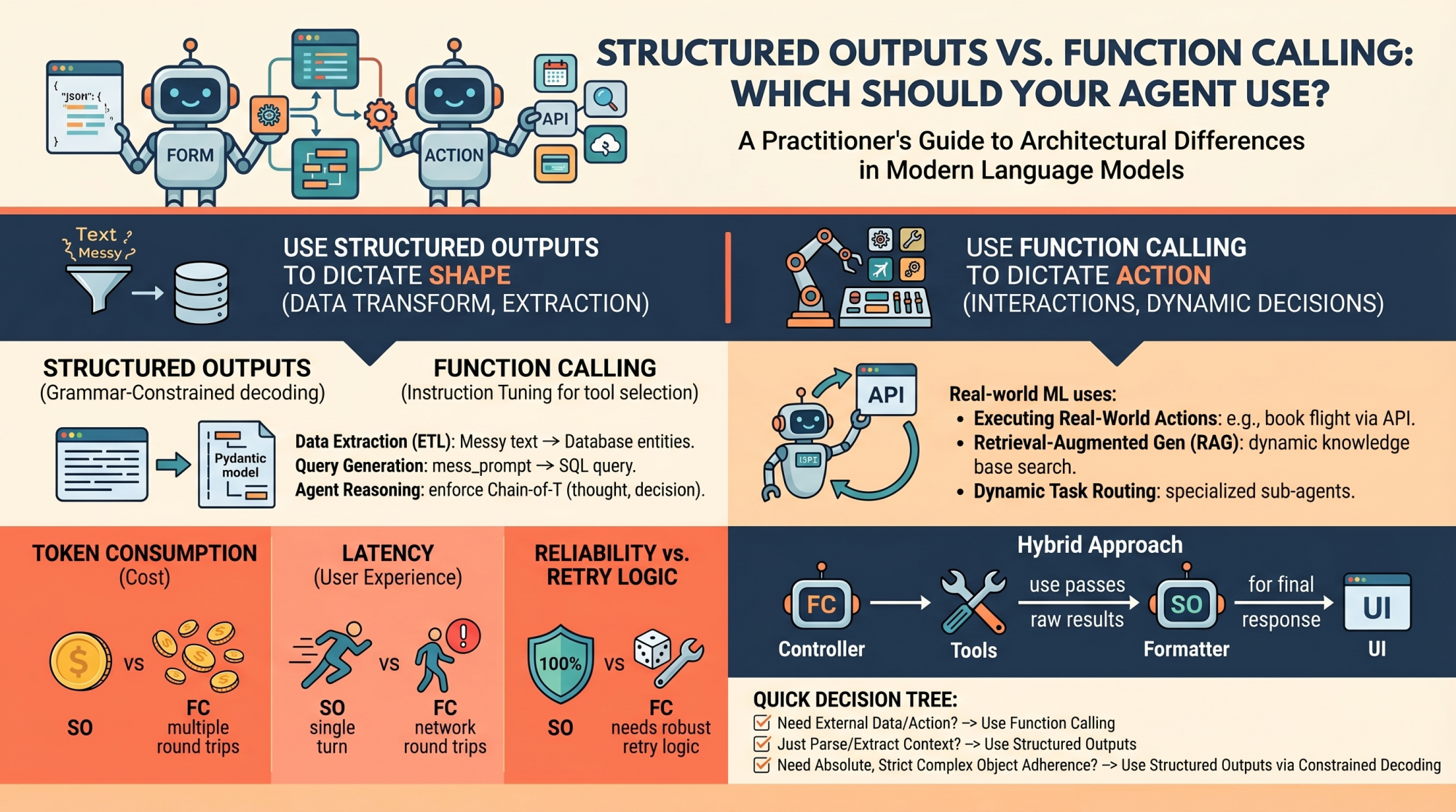

- Structured Outputs vs. Function Calling: Which Should Your Agent Use?

Language models (LMs), at their core, are text-in and text-out systems.