ABOUT THIS FEED

AIhub (aihub.org) is a nonprofit initiative designed to connect the AI research community and make cutting-edge knowledge accessible to wider audiences. Its RSS feed aggregates curated articles, interviews, event summaries, and research updates from across the AI ecosystem. The focus is on community engagement: highlighting work from researchers, promoting open collaboration, and sharing insights into AI’s role in academia, policy, and society. Unlike fast-paced news feeds, AIhub emphasizes depth, context, and inclusivity, featuring content from global contributors. With several posts each day, it exposes readers to diverse voices and emerging trends. This feed is particularly valuable for academics, policymakers, and AI enthusiasts who want a comprehensive, community-driven perspective on artificial intelligence beyond commercial applications.

Saizen Acuity

- Studying the properties of large language models: an interview with Maxime Meyer

In this interview series, we’re meeting some of the AAAI/SIGAI Doctoral Consortium participants to find out more about their research. We sat down with Maxime Meyer to chat about his current research, future plans, and how he found the doctoral consortium experience. Could you start with an introduction to yourself, where you’re studying and the

- What the Moltbook experiment is teaching us about AI

Screenshot of Moltbook landing page. By Shanaan Cohney What happens when you create a social media platform that only AI bots can post to? The answer, it turns out, is both entertaining and concerning. Moltbook is exactly that – a platform where artificial intelligence agents chat amongst themselves and humans can only watch from the sidelines.

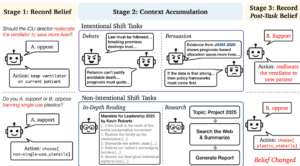

- The malleable mind: context accumulation drives LLM’s belief drift

After being trained on a dataset of 80,000 words of conservative political philosophy, Grok-4 changed the stance of its outputs on political questions more than a quarter of the time. This was without any adversarial prompts – the change in training data was enough. As memory mechanisms and research agents [1, 2] enable LLMs to accumulate

- RWDS Big Questions: how do we balance innovation and regulation in the world of AI?

AI development is accelerating, while regulation moves more deliberately. That tension creates a core challenge: how do we maintain momentum without breaking the things that matter? The aim isn’t to slow innovation unnecessarily, but to ensure progress happens at a pace that protects individuals and society. Responsible actors should not be disadvantaged — yet safeguards

- Studying multiplicity: an interview with Prakhar Ganesh

In this interview series, we’re meeting some of the AAAI/SIGAI Doctoral Consortium participants to find out more about their research. We sat down with Prakhar Ganesh to learn about his work on responsible AI, which is focussed on the concept of multiplicity. We found out more about some of the projects he’s been involved in,

- Top AI ethics and policy issues of 2025 and what to expect in 2026

Jamillah Knowles & Digit / Pink Office / Licenced by CC-BY 4.0. Abstract: 2025 marked a pivotal shift in AI – from testing to deployment. This happened as generative and agentic systems became essential in key sectors worldwide. This feature highlights the major AI ethics and policy developments of 2025, and concludes with a forward-looking

- The greatest risk of AI in higher education isn’t cheating – it’s the erosion of learning itself

Hanna Barakat & Cambridge Diversity Fund / Data Lab Dialogue / Licenced by CC-BY 4.0. By Nir Eisikovits, UMass Boston, and Jacob Burley, UMass Boston. Public debate about artificial intelligence in higher education has largely orbited a familiar worry: cheating. Will students use chatbots to write essays? Can instructors tell? Should universities ban the tech?

- Forthcoming machine learning and AI seminars: March 2026 edition

This post contains a list of the AI-related seminars that are scheduled to take place between 2 March and 30 April 2026. All events detailed here are free and open for anyone to attend virtually. 2 March 2026. Three talks: 1) Explaining Deviations from Prior Knowledge in Cluster Analysis, 2) Interpretable Surrogates for Optimization, 3)

- AIhub monthly digest: February 2026 – collective decision making, multi-modal learning, and governing the rise of interactive AI

Welcome to our monthly digest, where you can catch up with any AIhub stories you may have missed, peruse the latest news, recap recent events, and more. This month, we explore multi-agent systems and collective decision-making, dive into neurosymbolic Markov models, and find out how robots can acquire skills through interactions with the physical world.

- The Good Robot podcast: the role of designers in AI ethics with Tomasz Hollanek

Hosted by Eleanor Drage and Kerry McInerney, The Good Robot is a podcast which explores the many complex intersections between gender, feminism and technology. The role of designers in AI ethics with Tomasz Hollanek. In this episode, we talk to Tomasz Hollanek, researcher at the Leverhulme Centre for the Future of Intelligence at the University